In the era of AI, who owns the IP?

When you sign with an AI vendor, right after asking how they keep you safe at scale, ask who owns what you build on top of them. We believe in radical transparency at actAVA, so we show you exactly what you need to know before we even begin.

By Deon Metelski, CPO

Most healthcare buyers walk into AI vendor conversations with a security checklist and walk out without ever asking the second question: who owns the agents, prompts, and configurations you're about to spend a year building? The answer matters more than people think, and the standard vendor answer — "you own your outputs" — usually falls apart the moment you read the sublicense clause.

Two questions everyone in healthcare should be asking their AI vendor. Here they are in the order that matters.

How is my data actually secured?

Data has to move for agentic AI to work. An agent that can't perceive, reason, and act on real patient context isn't an agent — it's a chatbot with a stethoscope prop. So the real question isn't whether to share data with your AI vendor, it's whether the vendor's controls are worth trusting with PHI.

Here's what actAVA does, in plain terms:

AES-256 encryption at rest and in transit.

MFA and least-privilege access by default. Team members see the specific data required for their role and nothing else.

Continuous vulnerability scanning and third-party penetration testing on a fixed cadence. We find the weak spots before someone else does.

Network segmentation and secure API gateways to keep customer data isolated per tenant.

Scheduled key rotation — we change the locks on purpose, not when something breaks.

Background checks and mandatory annual security training for every employee, plus ongoing phishing and social-engineering exercises.

Full audit logging across all system activity, with retention that matches HIPAA and SOC 2 evidence requirements.

Tested disaster recovery and business continuity plans — not a document in a drawer, actually tested.

Third-party risk management, with every subprocessor reviewed annually.

actAVA maintains HIPAA compliance and SOC 2 Type II attestation, documented in our Trust Center.

That's the floor. It's table stakes for any serious healthcare AI vendor, and if your current vendor can't show you evidence for each of those bullets, that's your answer.

Who owns the IP for the agents you build?

This is the question most buyers skip, and it's the one that decides whether you're building equity in your own AI capability or renting it from someone who can change the terms later.

Our position is simple: you use our platform (that's our IP) to create your own IP in the form of agents. The platform stays ours. The agents are yours.

Customer data stays with the customer. All right, title, and interest in Personal Data — including PHI — processed through the actAVA platform remains with our customers. Full stop. We don't train on it, we don't resell it, we don't sublicense it.

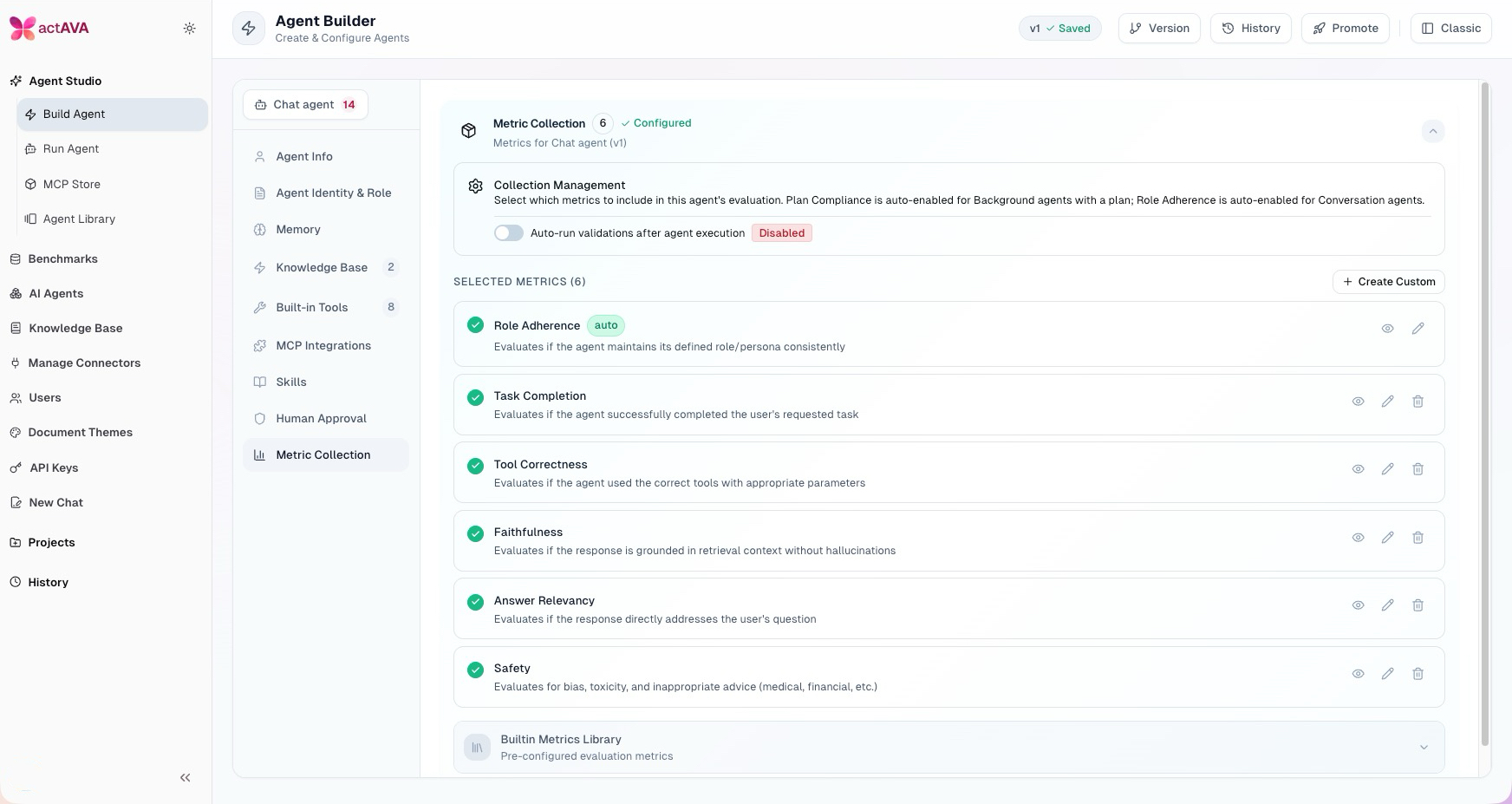

actAVA KORA|BLUE agents are exportable and belong to you. Every agent built in our BLUE agent-building and orchestration suite can be exported in a standard JSON format. The export contains the full configuration dump — system prompt, model tier, plan-and-tasks definition, tool list, MCP server bindings, tool allowlists, memories, HITL policies, temperature, max tokens, and multi-agent orchestration config. That's everything you'd need to reconstruct the agent's behavior on another platform. You won't get KORA's deep reasoning, evaluation, or safety harness if you leave — but the agent itself travels with you. actAVA does not use or resell customer agents. We maintain a starter library of common healthcare agents that customers can fork and modify, and that's where the sharing ends.
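To make that list concrete, here is a minimal Python sketch of how a buyer could check an exported agent for completeness before relying on it. The key names and the example agent are hypothetical stand-ins for the components listed above; they are assumptions for illustration, not actAVA's published export schema.

```python
import json

# Hypothetical top-level keys mirroring the components the export is said to
# contain. These names are illustrative assumptions, not an official schema.
EXPECTED_SECTIONS = (
    "system_prompt",
    "model_tier",
    "plan_and_tasks",
    "tools",
    "mcp_servers",
    "tool_allowlist",
    "memories",
    "hitl_policies",
    "temperature",
    "max_tokens",
    "orchestration",
)


def missing_sections(export_text: str) -> list[str]:
    """Return the expected sections that are absent from a JSON agent export."""
    config = json.loads(export_text)
    return [key for key in EXPECTED_SECTIONS if key not in config]


# A made-up export for a hypothetical prior-authorization agent.
example_export = json.dumps({
    "system_prompt": "You help staff assemble prior-authorization packets.",
    "model_tier": "standard",
    "plan_and_tasks": [{"step": 1, "task": "Collect the ordering note"}],
    "tools": ["ehr_lookup"],
    "mcp_servers": [{"name": "ehr", "url": "https://example.invalid/mcp"}],
    "tool_allowlist": ["ehr_lookup"],
    "memories": [],
    "hitl_policies": {"require_review_before_submit": True},
    "temperature": 0.2,
    "max_tokens": 2048,
    "orchestration": {"role": "worker", "parent": None},
})

print(missing_sections(example_export))  # → []
```

An empty list means every listed component is present; anything it returns is something you would have to rebuild by hand on another platform.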

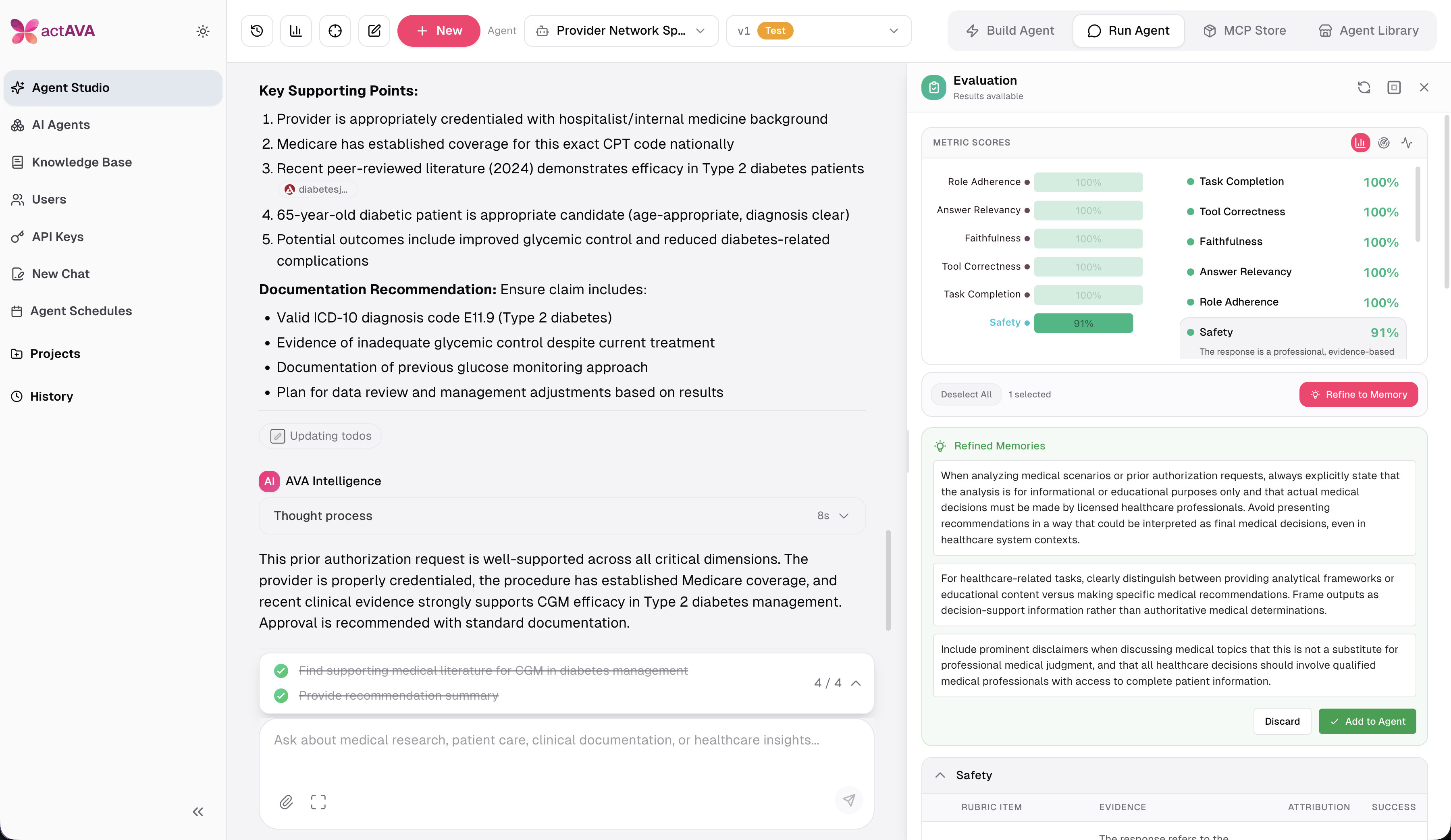

actAVA KORA|RED evaluation results are tied to the platform. Every agent is tested and monitored in our RED safety and remediation suite for bias, hallucination, cost, scalability, and regression. Those evaluation results are linked to the associated BLUE agent and surface in your dashboards while the agent lives on KORA. The RED metadata itself (the evaluation harness, the test corpus, the graded traces) is platform IP and can't be exported. When an agent leaves KORA, it leaves the safety harness behind. That's the trade-off, and customers should understand it up front.

actAVA KORA|GREEN learning: the improvements transfer, the training signal doesn't. GREEN is our continuous-learning layer. Any reinforcement learning outcomes that you choose to fold back into your BLUE agent configuration — updated prompts, revised plans, new rules — go with you on export, because they live in the BLUE config. What doesn't travel is the underlying learning signal: the trajectory logs, the DPO preference pairs, the calibrated confidence scores, and the feedback traces. Those are how GREEN improves your agents over time, and they stay on KORA. The analogy I use: you keep the trained muscle, we keep the gym.
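The split between what travels and what stays can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the record layout, the key names, and the `fold_back` and `export_agent` helpers are hypothetical, chosen only to show that an improvement written into the BLUE config leaves with the agent while RED metadata and the GREEN training signal do not.

```python
import json

# Hypothetical platform record for one agent. The three groups mirror the
# BLUE / RED / GREEN split described above; every key name is an assumption.
agent_record = {
    "blue_config": {
        "system_prompt": "Summarize discharge notes for care managers.",
        "rules": ["Flag missing medication reconciliation."],
    },
    "red_metadata": {
        "graded_traces": ["trace-001"],
        "test_corpus_id": "corpus-a",
    },
    "green_signal": {
        "trajectory_logs": ["run-17"],
        "dpo_pairs": [{"chosen": "A", "rejected": "B"}],
        "confidence_scores": [0.82],
    },
}


def fold_back(record: dict, new_rule: str) -> None:
    """Accept a GREEN-suggested improvement by writing it into the BLUE config."""
    record["blue_config"]["rules"].append(new_rule)


def export_agent(record: dict) -> str:
    """Serialize only the BLUE config; RED metadata and GREEN signal stay behind."""
    return json.dumps(record["blue_config"], indent=2)


fold_back(agent_record, "Cite the source note for every summary line.")
exported = json.loads(export_agent(agent_record))
print(len(exported["rules"]))  # → 2
print("dpo_pairs" in exported)  # → False
```

In this sketch, the folded-back rule is the trained muscle; the trajectory logs and preference pairs are the gym.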

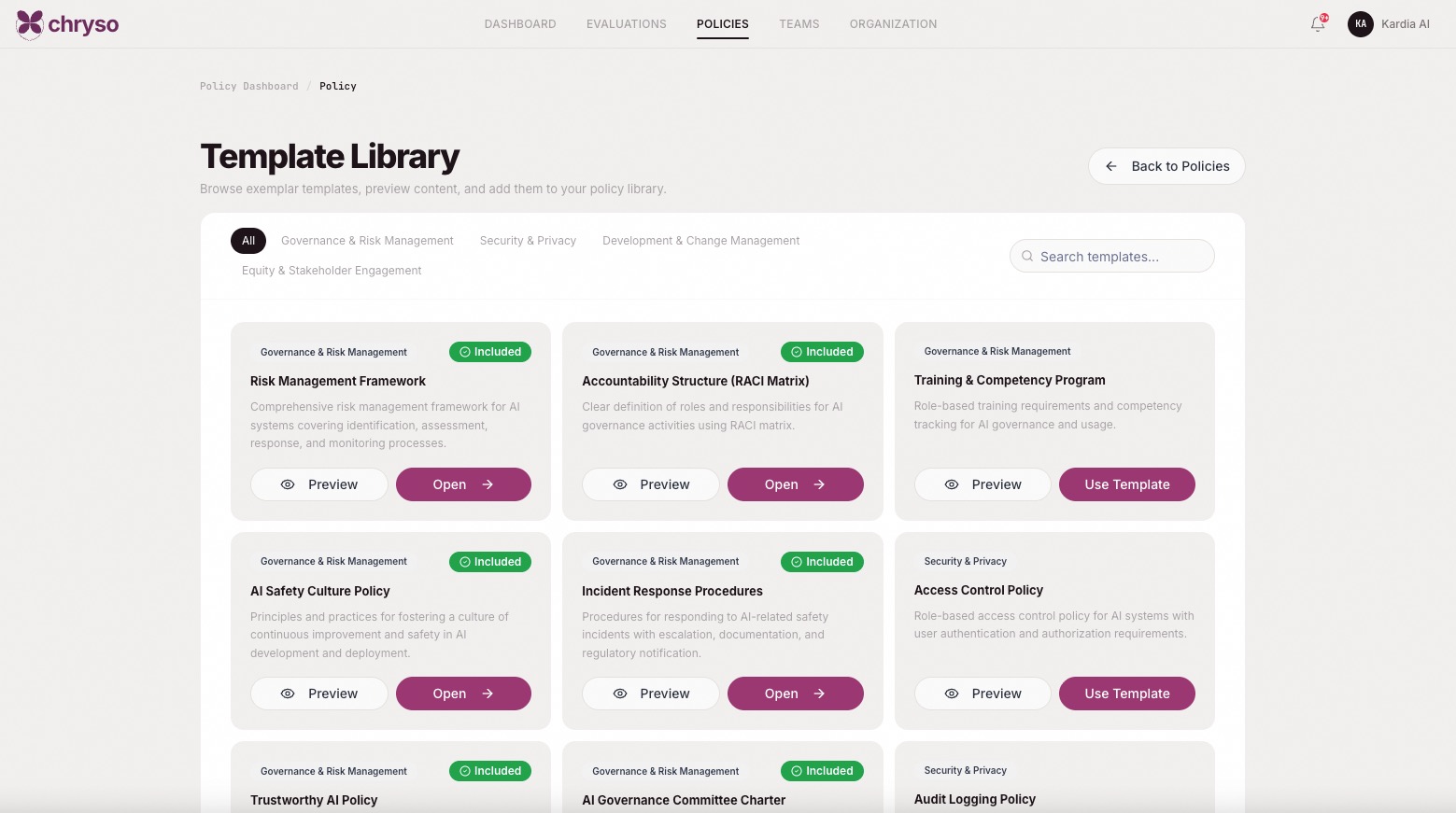

actAVA CHRYSO is the governance layer over all of it. This is the piece most vendor conversations miss. CHRYSO is our AI policy and compliance suite: an enterprise AI registry that tracks every agent, maps it to standards, and maintains the governance evidence. We use CHRYSO to manage our own vendors internally, and it's the product we sell to customers who need to manage the vendors in their AI stack. If you're a health system managing a portfolio of AI tools from multiple vendors, CHRYSO gives you one registry, a unified compliance view, and a single source of truth for AI governance. That's the other half of the IP story: owning your agents is necessary, but owning your governance over those agents is what keeps you out of trouble with regulators, boards, and counsel.

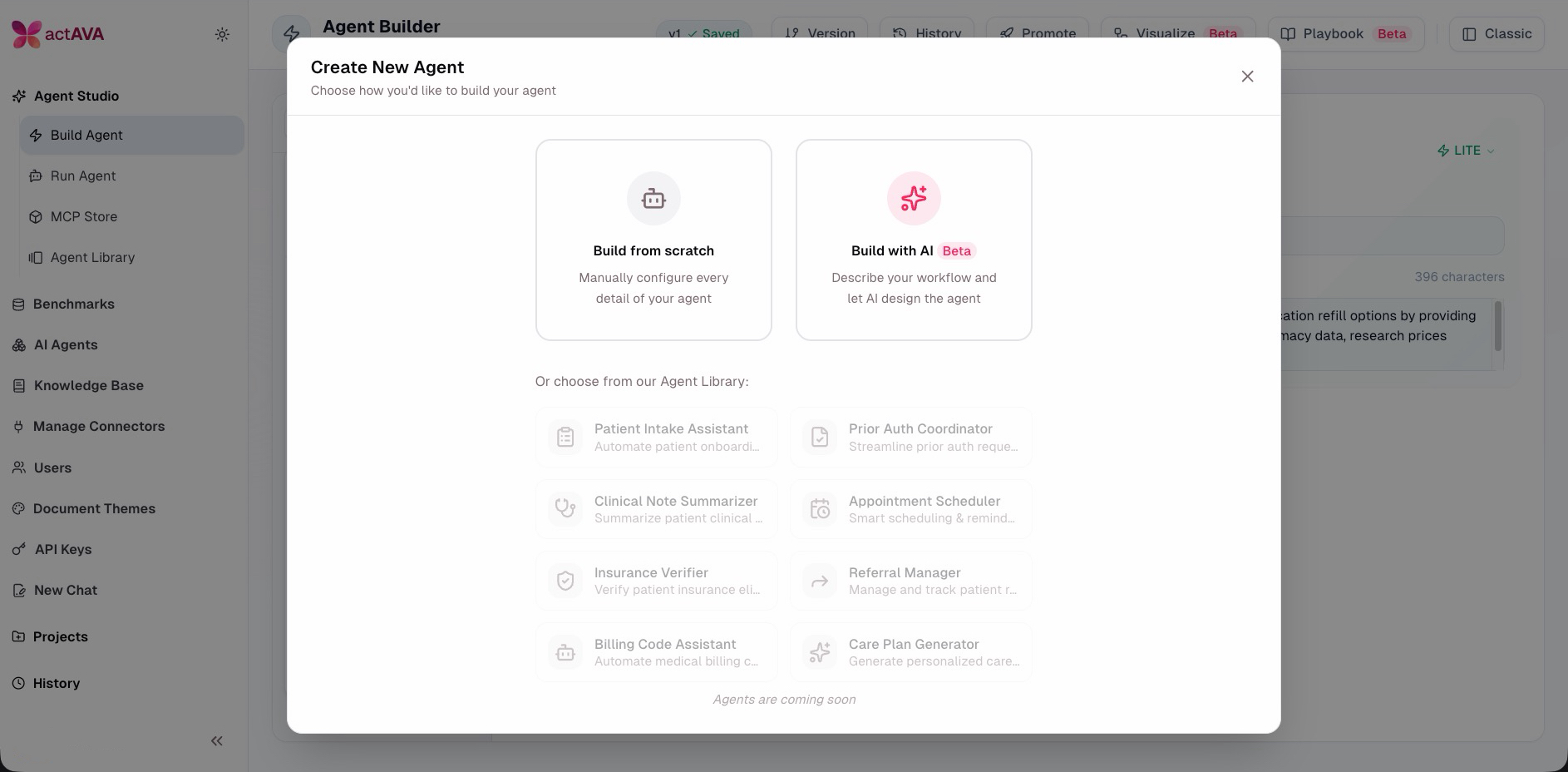

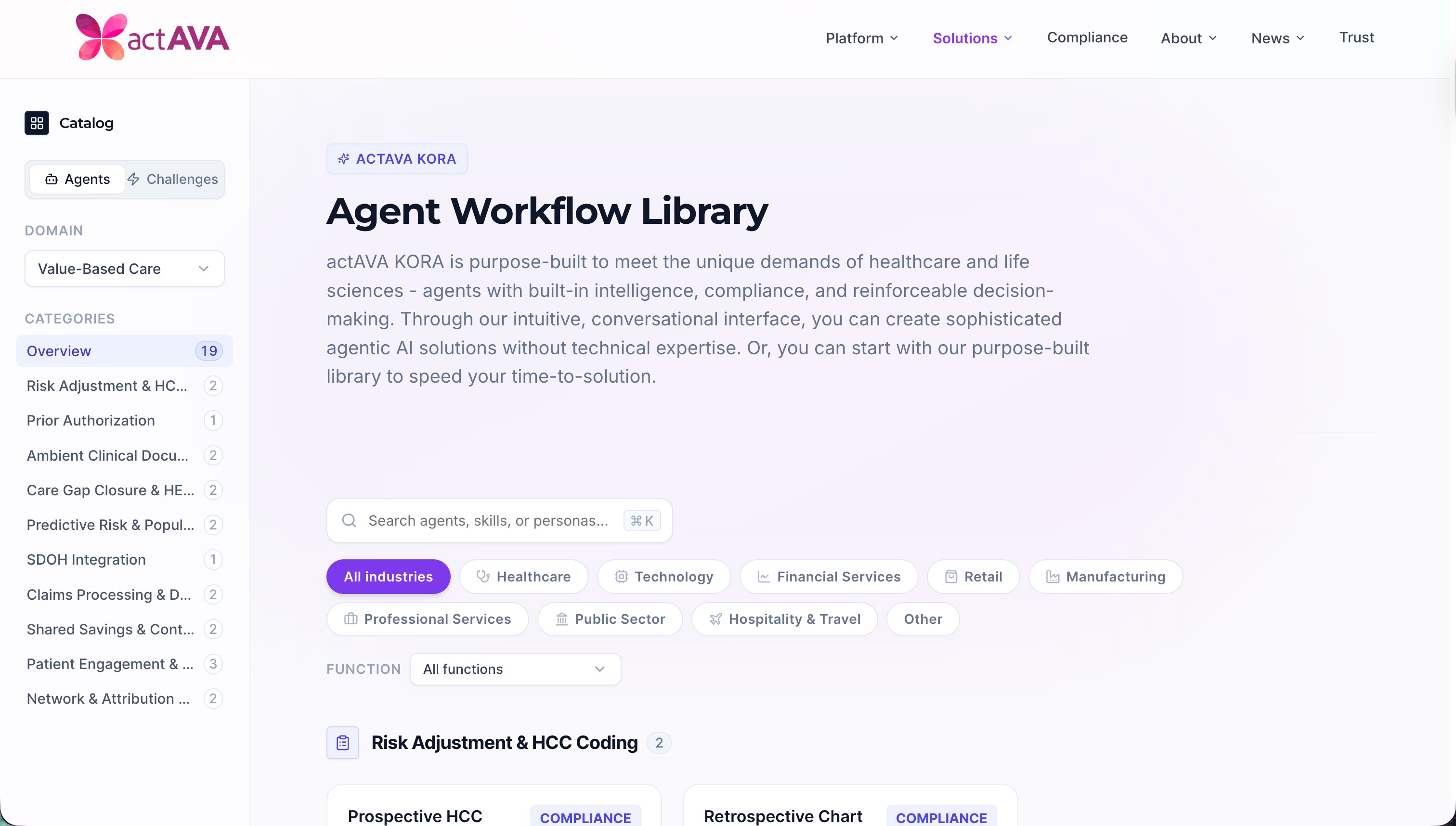

actAVA Agent Library: a specialized repository of pre-built AI agents for use in secure enterprise environments. Our customers get access to all of these baseline agents for use across their organizations. The library contains a range of off-the-shelf agents designed for specific healthcare and life sciences workflows and business functions. Instead of building from scratch, customers can deploy our baselines for their products and team productivity, then modify them to make them their own. Any changes they make are their IP.

The short version

When you're evaluating an AI vendor in healthcare, ask both questions and don't accept marketing answers on either:

Security: Can you share your SOC 2 report, HIPAA compliance documentation, and penetration testing cadence? What's in your subprocessor list? What happens to my data if I terminate?

IP and governance: Who owns the agents I build? Can I export them? In what format? What's your training policy on my inputs and outputs? And how do I manage the governance of everything I build on your platform?

If your vendor stumbles on either, that's your answer.